Using Synchronous Q&A Software to Promote Student-Staff Interactions

Dr Alex Squires and Prof Dan Rigby

University of Manchester

Published March 2023

This post expands on Wilson’s (2022) experience with synchronous Question and Answer (Q&A) software by presenting further evidence on student use and assessment of a real-time, synchronous, online discussion tool (Slido). One key difference between Wilson’s experience of using Q&A and ours was the addition of a second instructor to respond to questions in real time, so that the lecture was not interrupted. We assess the introduction of Slido using different data types (quantitative, qualitative) and data sources (usage data, survey responses).

Synchronous Discussion Forum

The COVID-19 pandemic required many UK universities to take a hybrid (Hyflex) approach to teaching. The presence in synchronous classes of both online (watching a live feed) and on-campus students prompted new methods and new tools to be adopted. Equity concerns required online students to be able to ask questions and interact with the instructor.

To this end, in 2021/22 at the University of Manchester, we trialled a discussion tool to allow online students to engage, in real time, with teaching staff during lectures. The module was a first-year Microeconomics course with over 1000 students from a variety of degree programmes. Although the discussion tool was aimed at online students, it was also taken up by students present in the classroom. The tool we adopted and evaluated was Slido (Slido, Slido.com).

Slido was then used on a first year introductory Mathematics module, in 2022/23. This is a smaller module with only 225 students. Students who take the introductory Mathematics module are also required to take the Microeconomics module so the two groups are comparable. In both modules this synchronous Q&A software was used in addition to an asynchronous discussion board available to students. This meant that students still had a platform to ask questions after the class and so the focus of Slido was on clarifying the lecture content as it was delivered.

Slido is one of several online tools which allow users to ask questions and take part in polls and quizzes. Students do not need to register with Slido and can engage via a website or an app. At the start of every lecture students were given a link to Slido; they could then ask questions anonymously and vote for the questions they considered most important. This option was available throughout the lecture. The second instructor was present in the lecture to monitor Slido, respond to questions and, if necessary, alert the lecturer to a query. The role of the second instructor was performed by a tutor/teaching assistant on each module.

Slido records data on the number of users and the number of questions asked in each session. These data were augmented by survey results about students’ use and assessment of Slido.

"Although prompted by a desire to allow remote students to ask questions, [...] students present in the lecture theatre also used the tool."

Slido Evaluation: Usage

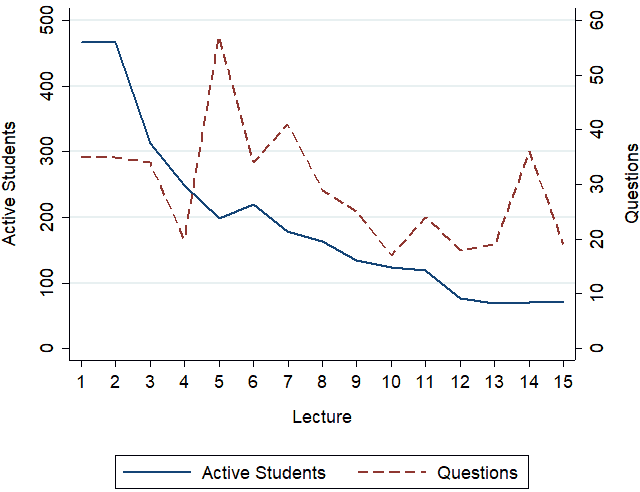

Figure 1 shows the number of people joining each Slido session (Active Students) and the number of posts (typically questions) posted per session for Microeconomics I.

Figure 1: Number of Slido Logins and Posts per Lecture

Figure 1 shows that the number of users visiting each lecture’s Slido page declined over the course of the semester from an initial 466; the mean number of logins per lecture was 194.

The number of questions posted per (50-minute) lecture is also shown in Figure 1 (right-hand Y-axis). The decline in the number of questions posted was much less marked than the decline in the number of visitors to each Slido page. A total of 425 questions were asked over the semester, with a mean of 28 questions posted per lecture. This may indicate that students were using the software only if they had a question and were not routinely monitoring questions/responses.

Slido Evaluation: Student Assessment

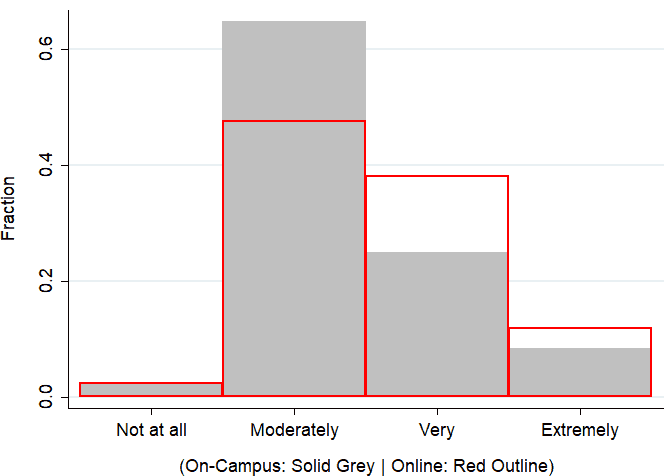

In an end-of-semester survey we asked students to rate the usefulness of Slido. A total of 175 of them responded. These data are displayed in aggregate, and by online/on-campus status, in Table 1 and Figure 2.

Table 1. Student Evaluation of Slido Usefulness

| Cohort | | Not at all | Moderately | Very | Extremely |
|---|---|---|---|---|---|
| All Students | N | 4 | 106 | 49 | 16 |
| | % | 2 | 61 | 28 | 9 |
| On-Campus | N | 3 | 86 | 33 | 11 |
| | % | 2.3 | 64.7 | 24.8 | 8.3 |
| Online | N | 1 | 20 | 16 | 5 |
| | % | 2.4 | 47.6 | 38.1 | 11.9 |

Figure 2: Perceptions of Usefulness of Slido, by Online/On-Campus Status

Notes: N = 175. “On-Campus” students are those who had attended a minimum of one lecture in person. “Online” students are those who attended zero lectures in person. Online N = 42, On-Campus N = 133.

Overall, 37% of students indicated that Slido was “very” or “extremely” useful and 61% reported it as moderately useful; only 2% indicated it was of no use. The online students found Slido more useful than the on-campus students did: half of the online students (21 of 42) rated the Q&A software “very” or “extremely” useful, whereas most on-campus students (65%) found it “moderately” useful. This suggests that remote students are more likely than on-campus students to find real-time online communication platforms useful. Generally, the Q&A software was viewed positively by its users. However, we must acknowledge the 135 respondents to the survey who had not used Slido and were therefore not able to rate the usefulness of the Q&A tool.
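The percentages quoted above can be recomputed from the response counts in Table 1. The following is a minimal sketch rather than the analysis actually used for the post: the names `COUNTS`, `percentages` and `high_share` are hypothetical, and the only data are the counts reported in the table.

```python
# Response counts copied from Table 1, in the order:
# not at all, moderately, very, extremely.
# The dictionary and function names are illustrative assumptions.
COUNTS = {
    "On-Campus": (3, 86, 33, 11),
    "Online": (1, 20, 16, 5),
}


def percentages(counts):
    """Return each count as a percentage of the row total, to 1 d.p."""
    total = sum(counts)
    return [round(100 * c / total, 1) for c in counts]


def high_share(counts):
    """Percentage of respondents answering 'very' or 'extremely'."""
    return round(100 * (counts[2] + counts[3]) / sum(counts), 1)


# The "All Students" row is the element-wise sum of the two groups.
all_students = tuple(
    a + b for a, b in zip(COUNTS["On-Campus"], COUNTS["Online"])
)

for cohort, counts in {"All Students": all_students, **COUNTS}.items():
    print(cohort, sum(counts), percentages(counts), high_share(counts))
```

On these counts the share rating Slido “very” or “extremely” useful is 37.1% overall, 33.1% for on-campus students and 50.0% for online students.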

Student Comments

Students made further, unprompted, comments on the use of Slido in their end of term course evaluation.

- “[The second instructor] was really helpful in responding questions on the Zoom meeting and on slido. He was really quick and he provided accurate answers which helped even more in clarifying things from [the] lecture”

- “[The second instructor] was able to successfully.. answer questions on Economics both in Zoom and Slido. His responses were always sophisticated, and very much appreciated.”

- “The [second instructor], responding [to] questions while [the lecturer] gave his lectures was really effective. Any doubts we had were cleared instantly.”

The comments on Slido are consistent with the responses in Figure 2.

Further Usage

Given the levels of use, and ex-post assessments, of Slido in Microeconomics I, it was trialled in a first-year Introductory Mathematics module in the following academic year. The Introductory Mathematics module has fewer students (225), but they all take Microeconomics I, so the two groups are comparable. The approach was similar, with a second instructor present in each lecture monitoring Slido, but this time a unique link and QR code were issued for each week rather than for each session. Table 2 below shows the questions asked in the Introductory Mathematics module:

Table 2. Use of Slido in Introductory Mathematics Module

| Week | Questions | About Content |
|---|---|---|
| 1 | 29 | 8 |
| 2 | 8 | 8 |
| 3 | 3 | 3 |
| 4 | 0 | 0 |

The questions asked in Week 1 were predominantly about course organisation and administrative matters, with only 8 of the 29 questions about course content across the week’s two lectures. The number of students joining the Q&A was also below 15. After Week 4, in which the two lectures yielded no questions, the trial in Introductory Mathematics was ended: monitoring Slido was a poor use of the time of the additional member of academic staff, and students did seem comfortable asking questions during the lecture itself.
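The weekly split between content and non-content questions in Table 2 can be expressed as a share. This is a small illustrative sketch using only the counts in the table; `TABLE_2` and `content_share` are hypothetical names.

```python
# Weekly counts copied from Table 2:
# week -> (total questions, questions about course content).
# Names are illustrative assumptions, not part of any Slido export.
TABLE_2 = {1: (29, 8), 2: (8, 8), 3: (3, 3), 4: (0, 0)}


def content_share(total, about_content):
    """Percentage of questions about content; None if none were asked."""
    if total == 0:
        return None
    return round(100 * about_content / total, 1)


for week, (total, about_content) in TABLE_2.items():
    print(week, total, about_content, content_share(total, about_content))
```

The guard for a zero total matters here because Week 4 had no questions at all, so a share cannot be computed for it.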

Conclusion

Our experience of Slido was markedly different in the two first-year modules in which it was trialled. The student cohorts are similar (those on the Mathematics unit are a subset of those on the Microeconomics unit), which may suggest that the nature of the content is the source of the difference.

The experience of using Slido in Microeconomics I was positive. Although prompted by a desire to allow remote students to ask questions, our experience was that students present in the lecture theatre also used the tool, in part because asking a question in a lecture of several hundred students is intimidating. As well as resolving student queries, Slido helped instructors to gauge the extent and nature of student queries and respond accordingly. For example, the number of questions asked spiked in Lecture 5 (see Figure 1), which alerted us to common difficulties students had in understanding some concepts used in the lecture and allowed us to address those before the next lecture.

The experience of using Slido in Introductory Mathematics was not positive; the decline in usage prompted abandonment after 4 weeks of lectures. One explanation would be that the students found the material less challenging in the Mathematics unit than in the Microeconomics unit, but this is not supported by grades and student feedback. Alternatively, the smaller student numbers in the Introductory Mathematics module may have meant students felt more comfortable asking questions aloud rather than relying on the Q&A software.

A further potential explanation is that Q&A software provides less benefit to students in more quantitative subjects, where questions may be of a more technical nature. Perhaps the queries and confusions of students in Mathematics were less conducive to quickly typed questions than is the case for Economics (“why does marginal utility decline?”, “what does Ricardo mean by rent?”). Or perhaps students are so focussed on writing down the maths content accurately that they have insufficient time to reflect on it and articulate any confusion in real time, which would make asynchronous tools (such as Piazza) more useful. The experience of using Slido in the Introductory Mathematics module suggests that there may be more benefit to using Q&A software in less quantitative modules.

Any evaluation of Slido, or other synchronous Q&A software, should take account of the (opportunity) cost of its use. Having an instructor deliver content whilst also monitoring Slido would likely interrupt the flow of the lecture and be impractical with a sufficiently large number of questions. Using a second instructor to monitor Slido worked well in one unit, but it is a significant commitment of staff time and raises the question of whether that time could be better used in a different way, for example in open-door student surgeries. Our experience was that Slido usage did not justify the resources required in the Mathematics unit, but did in Microeconomics. This assessment was informed by the recurring experience that most students are reluctant to utilise existing ‘office hours’; hence there does seem to be value in providing real-time responses at the time when students have their questions, rather than hoping they will seek staff out to resolve them subsequently.

References

Slido. Available at: https://www.slido.com/ [Accessed 12 September 2022].

Wilson, C. 2022. "Using Digital Tools for Live Q+A within Teaching Sessions" [Online]. The Economics Network. https://doi.org/10.53593/n3541s [Accessed 15 February 2023].
